The Evolution of Neural Networks

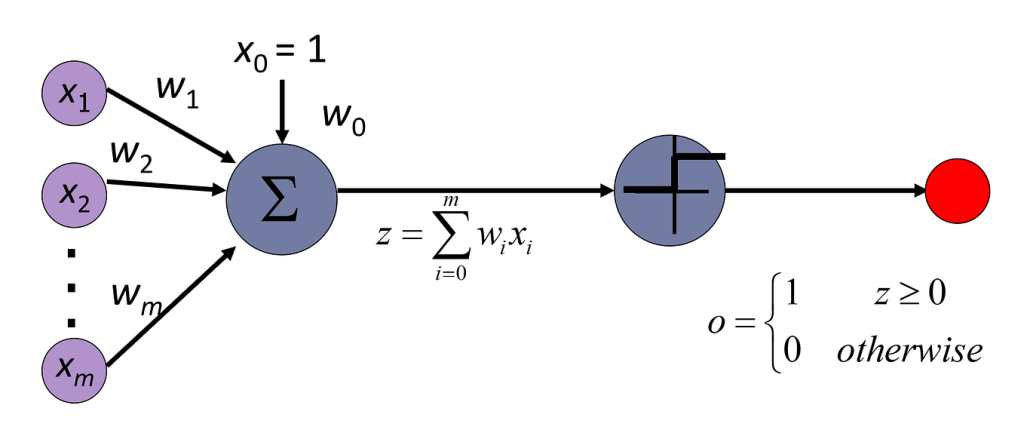

Since the introduction of the Perceptron in 1958, the journey of neural networks has been characterized by innovation, resilience, and profound impact. The Perceptron, designed by Frank Rosenblatt, was an early step toward machine learning, capable of performing basic binary classification tasks. This simple model passed weighted inputs through a single layer of output nodes, creating a foundation for more complex systems.
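To make the idea of binary classification with a single layer concrete, here is a minimal sketch of a perceptron trained with the classic error-driven update rule. The function names, the learning rate, and the choice of the logical AND task are illustrative assumptions, not details taken from Rosenblatt's original work.

```python
def predict(weights, bias, inputs):
    """Step activation: output 1 if the weighted sum is positive, else 0."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=10, lr=1.0):
    """Train a single-output perceptron on (inputs, target) pairs."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)  # -1, 0, or +1
            # Shift the decision boundary only when the prediction was wrong.
            weights = [w + lr * error * x for w, x in zip(weights, inputs)]
            bias += lr * error
    return weights, bias


# Logical AND is linearly separable, so a single perceptron can learn it.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_DATA)
and_predictions = [predict(weights, bias, x) for x, _ in AND_DATA]
print(and_predictions)  # [0, 0, 0, 1]
```

The update touches the weights only on misclassified examples, which is why training settles once every point falls on the correct side of the boundary.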

Fast forward to 1986, and interest in neural networks was revitalized when David Rumelhart, Geoffrey Hinton, and Ronald J. Williams popularized the backpropagation algorithm. This breakthrough allowed multilayer networks to learn by propagating the output error backward through the layers and adjusting each weight to reduce it. Backpropagation enabled deeper networks to be trained, laying the groundwork for what would later become known as deep learning.

The next significant leap came in 2012, when AlexNet delivered a remarkable performance in the ImageNet Large Scale Visual Recognition Challenge. AlexNet demonstrated how deep convolutional neural networks (CNNs) could outperform traditional methods in image classification, achieving a top-5 error rate of just 15.3%, well ahead of the runner-up's 26.2%. This success not only validated the potential of neural networks but also spurred numerous advancements across various fields.

The influence of neural networks extends far beyond academia and into vital industries, shaping the future of technology. In healthcare, for instance, neural networks are used to analyze medical images, aid in early disease detection, and even predict patient admissions based on historical data. Companies like Zebra Medical Vision utilize deep learning algorithms to interpret X-rays, CT scans, and MRIs, leading to quicker and more accurate diagnostics.

In the finance sector, businesses use neural networks for tasks such as fraud detection, risk assessment, and algorithmic trading. High-frequency trading firms harness the capabilities of deep learning to identify patterns in stock market data, allowing them to make trades at lightning speed. Similarly, banks employ machine learning models to flag unusual account activity, enhancing security for customers.

Moreover, the rise of autonomous vehicles has showcased the critical role deep learning plays in perception and decision-making systems. Companies like Tesla leverage neural networks to process vast amounts of real-time sensor data, enabling their cars to navigate complex environments independently. This intersection of artificial intelligence and automotive technology is transforming transportation as we know it.

The evolution of neural networks is driven by a combination of advancements in computational power, the availability of big data, and innovative algorithmic strategies. As we continue to explore this fascinating field, it becomes increasingly important to acknowledge the vast complexities, ethical considerations, and potential future developments of neural networks. Recognizing their history not only underscores their significance but also encourages additional inquiry into the limitless possibilities that lie ahead.

The Foundations of Neural Networks

The early years of neural networks were largely defined by the limitations of the technology and the theoretical understanding at the time. Following the creation of the Perceptron, researchers in the 1960s began exploring the capabilities of multiple neuron-like structures, albeit with modest success. The concept was promising, but the computing power and data sets available were not yet sophisticated enough to support more advanced architectures.

This period saw a decline in enthusiasm for neural networks, often referred to as an “AI winter.” However, in 1986 David Rumelhart, Geoffrey Hinton, and Ronald J. Williams reignited interest by popularizing the backpropagation algorithm. By letting a network measure its output error and adjust its weights accordingly during training, backpropagation became a game-changer. It paved the way for the development of deep neural networks, consisting of multiple hidden layers capable of complex representations.
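The weight-adjustment idea behind backpropagation can be sketched for a very small network. The code below is a minimal illustration, assuming one hidden layer of sigmoid units, a squared-error loss, and the XOR task as training data; the function names, layer size, learning rate, and seed are all hypothetical choices made for this example.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(params, x):
    """Return the hidden activations and the network output for one input."""
    w1, b1, w2, b2 = params
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(w1, b1)]
    output = sigmoid(sum(w * h for w, h in zip(w2, hidden)) + b2)
    return hidden, output


def backward(params, x, target):
    """Gradients of 0.5 * (output - target)**2 with respect to every parameter."""
    w1, b1, w2, b2 = params
    hidden, output = forward(params, x)
    # Output layer: chain rule through the loss and the sigmoid.
    delta_out = (output - target) * output * (1.0 - output)
    grad_w2 = [delta_out * h for h in hidden]
    # Hidden layer: send the output error back through w2.
    delta_hidden = [delta_out * w * h * (1.0 - h) for w, h in zip(w2, hidden)]
    grad_w1 = [[d * xi for xi in x] for d in delta_hidden]
    return grad_w1, delta_hidden, grad_w2, delta_out


def total_loss(params, data):
    return sum(0.5 * (forward(params, x)[1] - t) ** 2 for x, t in data)


def train(data, hidden_units=3, epochs=2000, lr=0.5, seed=0):
    """Per-example gradient descent; epochs=0 returns the initial weights."""
    rng = random.Random(seed)
    w1 = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(hidden_units)]
    b1 = [0.0] * hidden_units
    w2 = [rng.uniform(-1, 1) for _ in range(hidden_units)]
    b2 = 0.0
    for _ in range(epochs):
        for x, t in data:
            g_w1, g_b1, g_w2, g_b2 = backward((w1, b1, w2, b2), x, t)
            w1 = [[w - lr * g for w, g in zip(row, g_row)]
                  for row, g_row in zip(w1, g_w1)]
            b1 = [b - lr * g for b, g in zip(b1, g_b1)]
            w2 = [w - lr * g for w, g in zip(w2, g_w2)]
            b2 -= lr * g_b2
    return w1, b1, w2, b2


XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
initial = train(XOR_DATA, epochs=0)
trained = train(XOR_DATA)
print("loss before:", total_loss(initial, XOR_DATA))
print("loss after: ", total_loss(trained, XOR_DATA))
```

Whether a run like this ends with a perfect XOR classifier depends on the initial weights and the learning rate, so the printed losses are best read as a diagnostic rather than a guaranteed result.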

A major turning point came in the mid-2000s, when researchers found practical ways to train networks with many stacked layers of neurons, ultimately leading to the emergence of deep learning. Advances in graphics processing units (GPUs) then significantly accelerated computation, allowing for the training of larger and deeper networks than ever before.

Key Developments in Neural Network Architecture

As the field grew, so did the complexity of neural network architectures. Some pivotal innovations include:

- Convolutional Neural Networks (CNNs): Designed primarily for image processing tasks, CNNs leverage convolutional layers to capture spatial hierarchies in images. Their success in computer vision applications built a strong case for deep learning.

- Recurrent Neural Networks (RNNs): Employed for sequential data analysis, such as language modeling and time series prediction, RNNs enabled the processing of sequences, leading to applications in natural language processing.

- Generative Adversarial Networks (GANs): Introduced in 2014, GANs revolutionized the creative aspect of machine learning by enabling machines to generate realistic media, from images to music, through adversarial training.
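As an illustration of the convolutional layers mentioned in the list above, the following sketch applies a single small filter to a toy image. It is a simplified, assumption-laden example: the `conv2d` helper, the 4x4 image, and the hand-written edge kernel are hypothetical, and a real CNN would learn its kernels during training rather than have them specified by hand.

```python
def conv2d(image, kernel):
    """Valid 2D cross-correlation, the operation CNN layers usually call convolution."""
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    output = []
    for r in range(out_rows):
        row = []
        for c in range(out_cols):
            # Weighted sum of the image patch currently under the kernel.
            row.append(sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(k_rows)
                for j in range(k_cols)
            ))
        output.append(row)
    return output


# A 4x4 image whose left half is dark (0) and right half is bright (1).
IMAGE = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hand-written kernel that responds to a dark-to-bright step from left to right.
VERTICAL_EDGE = [
    [-1, 1],
    [-1, 1],
]
feature_map = conv2d(IMAGE, VERTICAL_EDGE)
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The same kernel slides over every position, so the feature map lights up wherever the edge appears. Stacking such layers is what lets CNNs build up the spatial hierarchies described above.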

The growing capabilities of neural networks led to an explosion of applications across diverse sectors. In the realm of entertainment, Netflix employs recommendation algorithms based on user preferences, utilizing deep learning to enhance user experiences. In the retail industry, companies like Amazon harness neural networks in their recommendation engines to predict customer purchases, optimizing sales and enhancing customer satisfaction.

Every leap forward in neural network architecture sparked new debates regarding ethics and accountability. As models became increasingly sophisticated, the risks associated with biases in training data and the implications of automated decision-making came to the forefront. Understanding these unintended consequences is vital as society moves toward an AI-integrated future.

The progress from the Perceptron to modern deep learning represents a journey marked by transformative ideas and rigorous experimentation. As we look to the future, this narrative continues to evolve, unveiling new opportunities—and challenges—for harnessing the computational power of neural networks.

To trace “The Evolution of Neural Networks: From Perceptron to Deep Learning,” it helps to understand the milestones that have shaped the landscape of artificial intelligence (AI). The journey begins with the basic Perceptron, introduced by Frank Rosenblatt in 1958, which laid the foundation for neural network architecture. This simple model handled binary classification tasks, offering an early glimpse into the capabilities of machine learning.

However, the limitations of the Perceptron soon became apparent, especially on problems that are not linearly separable. This led to significant advancements during the 1980s, primarily through backpropagation and multilayer networks, which expanded the functionality and applications of artificial neural networks. The ability to train deeper networks meant that accuracy in tasks like image and speech recognition could be vastly improved, setting the stage for modern AI.

The development of Convolutional Neural Networks (CNNs) in the late 1980s and 1990s changed how machines interpret visual data, allowing for remarkable advancements in computer vision. Recurrent Neural Networks (RNNs) provided a framework for handling sequential data, crucial for tasks like natural language processing. With big data and increased computational power, the rise of deep learning in the 2010s marked a critical point in neural network evolution. Deep learning leverages more complex architectures, enabling systems to learn hierarchical features directly from the data, significantly enhancing performance in diverse applications.

The table below summarizes the core advantages and features that have emerged throughout this evolution.

| Category | Key Features |
|---|---|
| Neural Network Flexibility | Adaptable architecture for various tasks including classification and regression. |
| Advanced Learning Techniques | Incorporation of layered learning and backpropagation increases accuracy and efficiency. |
| Data Processing Capability | Ability to handle large datasets, improving model training and outcomes. |
| Multimodal Functionality | Integration of sound, image, and text processing allows for comprehensive AI tasks. |

In exploring these features, one can see how each advancement has contributed to more sophisticated systems that are now pervasive in our daily lives. As such, understanding this trajectory not only provides context for current technologies but also inspires future innovations within the field of artificial intelligence.

The Rise of Advanced Neural Network Techniques

With the establishment of sophisticated architectures like Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs), the growth of neural networks accelerated across various domains. Research teams worldwide began to exploit these advancements for real-world applications, leading to groundbreaking results in fields beyond mere academic theory. For instance, CNNs not only transformed the handling of image classification tasks but also ushered in advancements in medical imaging. Hospitals now use CNN-powered diagnostic tools to analyze X-rays, MRIs, and CT scans with remarkable accuracy, in some studies rivaling human specialists on narrowly defined tasks.

RNNs, on the other hand, marked a significant development in the realm of natural language processing (NLP). By processing inputs one step at a time while carrying a hidden state forward, these networks could take earlier context into account, which is crucial for tasks such as machine translation and sentiment analysis. Companies like Google and Facebook leveraged these architectures to enhance user interactions, enabling voice recognition and real-time translation applications.

Transformational Innovations in Deep Learning

The field did not stop evolving. The introduction of techniques such as Long Short-Term Memory (LSTM) networks and attention mechanisms brought further leaps. LSTM networks addressed limitations faced by standard RNNs, particularly the problem of vanishing gradients, by using gated memory cells that let models retain information over longer time spans. This advancement supported features such as predictive text on smartphones, improving the responsiveness and accuracy of our devices.
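A rough way to see why LSTMs cope better with long time spans is to compare one LSTM cell with a plain recurrent unit on a toy sequence. The sketch below uses scalar states and hand-picked weights, both of which are hypothetical simplifications: real LSTMs use vectors and learn their weights from data.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x, h_prev, c_prev, p):
    """One time step of a scalar LSTM cell (inputs, states, weights are floats)."""
    f = sigmoid(p["wf"] * x + p["uf"] * h_prev + p["bf"])    # forget gate
    i = sigmoid(p["wi"] * x + p["ui"] * h_prev + p["bi"])    # input gate
    o = sigmoid(p["wo"] * x + p["uo"] * h_prev + p["bo"])    # output gate
    g = math.tanh(p["wg"] * x + p["ug"] * h_prev + p["bg"])  # candidate value
    c = f * c_prev + i * g   # additive cell-state update
    h = o * math.tanh(c)     # hidden state passed to the next step
    return h, c


def rnn_step(x, h_prev, w_x=1.0, w_h=0.5):
    """One step of a plain tanh RNN, for comparison."""
    return math.tanh(w_x * x + w_h * h_prev)


# Hand-picked weights (an assumption for this demo): keep the forget gate
# near 1 and open the input gate only when x is large.
PARAMS = {
    "wf": 0.0, "uf": 0.0, "bf": 10.0,
    "wi": 10.0, "ui": 0.0, "bi": -5.0,
    "wo": 0.0, "uo": 0.0, "bo": 10.0,
    "wg": 2.0, "ug": 0.0, "bg": 0.0,
}

sequence = [1.0] + [0.0] * 50  # one signal followed by 50 empty steps
h, c, h_rnn = 0.0, 0.0, 0.0
for x in sequence:
    h, c = lstm_step(x, h, c, PARAMS)
    h_rnn = rnn_step(x, h_rnn)

print("LSTM cell state after 51 steps:", c)
print("plain RNN state after 51 steps:", h_rnn)
```

With these settings the LSTM's cell state still holds most of the first input after 50 empty steps, while the plain RNN's state has shrunk to almost nothing. The repeated shrinking factor in the plain RNN is the same effect that makes its gradients vanish over long sequences.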

Attention mechanisms, which underlie models like the Transformer architecture, revolutionized how sequential data is processed. This paradigm shift allowed models to focus on relevant parts of input data when generating responses. As a result, the capabilities of AI in tasks ranging from chatbots to automated content generation soared, leading to commercial success for companies investing in these technologies. For instance, OpenAI’s language models demonstrate significant improvements in text-based tasks thanks to attention mechanisms, providing coherent and contextually aware outputs.
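The idea of focusing on relevant parts of the input can be sketched as scaled dot-product attention, the core operation used in the Transformer. The example below is a minimal stdlib-only illustration; the toy keys, values, and query are invented for the demo, and the learned projection matrices and multiple heads of a full Transformer layer are deliberately left out.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(dimension).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        outputs.append([
            sum(w * v[d] for w, v in zip(weights, values))
            for d in range(len(values[0]))
        ])
        all_weights.append(weights)
    return outputs, all_weights


# Toy data: the single query points in the same direction as the second key.
KEYS = [[1.0, 0.0], [0.0, 1.0], [1.0, -1.0]]
VALUES = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
QUERIES = [[0.0, 8.0]]
outputs, weights = attention(QUERIES, KEYS, VALUES)
print("attention weights:", weights[0])
print("attended output:  ", outputs[0])
```

Because the weights come from a softmax, they always sum to 1, and here nearly all of the weight lands on the second key, so the output is close to the second value vector.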

Ethical Considerations in Neural Network Development

As neural networks delve deeper into our lives, ethical questions loom larger. The complexity of models often renders them opaque, creating concerns about bias and accountability. Instances of biased algorithms can lead to unjust outcomes, affecting sectors like hiring practices or criminal justice. Recognizing these potential pitfalls has prompted researchers and practitioners in the AI field to advocate for improved transparency and ethical considerations in training models, guiding the evolution of neural networks responsibly.

The past decade has witnessed not only rapid advancements in architecture but also a surge in public interest regarding ethical AI. Initiatives and principles focused on fairness, accountability, and transparency have emerged, aiming to mitigate the unintended consequences that accompany the deployment of advanced technologies. Engaging in dialogues about AI’s societal implications has become essential, ensuring that as we harness the computational power of neural networks, we do so with a keen awareness of our responsibilities.

Undoubtedly, the evolution of neural networks—from the humble Perceptron to the sophisticated layers of deep learning—highlights a captivating narrative of innovation that continually reshapes our understanding of AI. Challenges and opportunities beckon as the field progresses, pushing the boundaries of what machines can achieve. Each evolution invites us to explore both the wonders and the ethical dilemmas that are intrinsic to this technological journey.

Conclusion: A Journey Through the Evolution of Neural Networks

The journey of neural networks from the basic Perceptron to the complex architectures of deep learning encapsulates a remarkable era of technological advancement. The metamorphosis has been characterized by innovative strides that have not only enhanced computational capabilities but have also revolutionized various sectors including healthcare, finance, and technology. The advent of Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) brought about seismic shifts in image processing and natural language understanding, paving the way for transformative applications such as medical diagnostics and real-time translation services.

Moreover, the introduction of Long Short-Term Memory (LSTM) networks and attention mechanisms has broadened what AI applications can do. These advancements refine how machines interpret and respond to data, signaling a maturation of these systems. Nevertheless, alongside the promising developments comes the critical need for responsible AI practice. As neural networks integrate more deeply into our daily lives, the dialogue surrounding ethical accountability and bias management grows more pressing. Ensuring fairness and transparency in AI systems will be crucial in building trust and harnessing neural networks for societal benefit.

As we reflect on this evolution, it is clear that the future of neural networks holds even more promise. While challenges will undoubtedly persist, the commitment of researchers and industries to push forward responsibly will forge a path toward even greater innovations. The narrative of neural networks illustrates the balancing act of technological progress and ethical considerations, reminding us of our shared responsibility in shaping a future where AI serves humanity’s best interests.

Beatriz Johnson is a seasoned AI strategist and writer with a passion for simplifying the complexities of artificial intelligence and machine learning. With over a decade of experience in the tech industry, she specializes in topics like generative AI, automation tools, and emerging AI trends. Through her work on our website, Beatriz empowers readers to make informed decisions about adopting AI technologies and stay ahead in the rapidly evolving digital landscape.